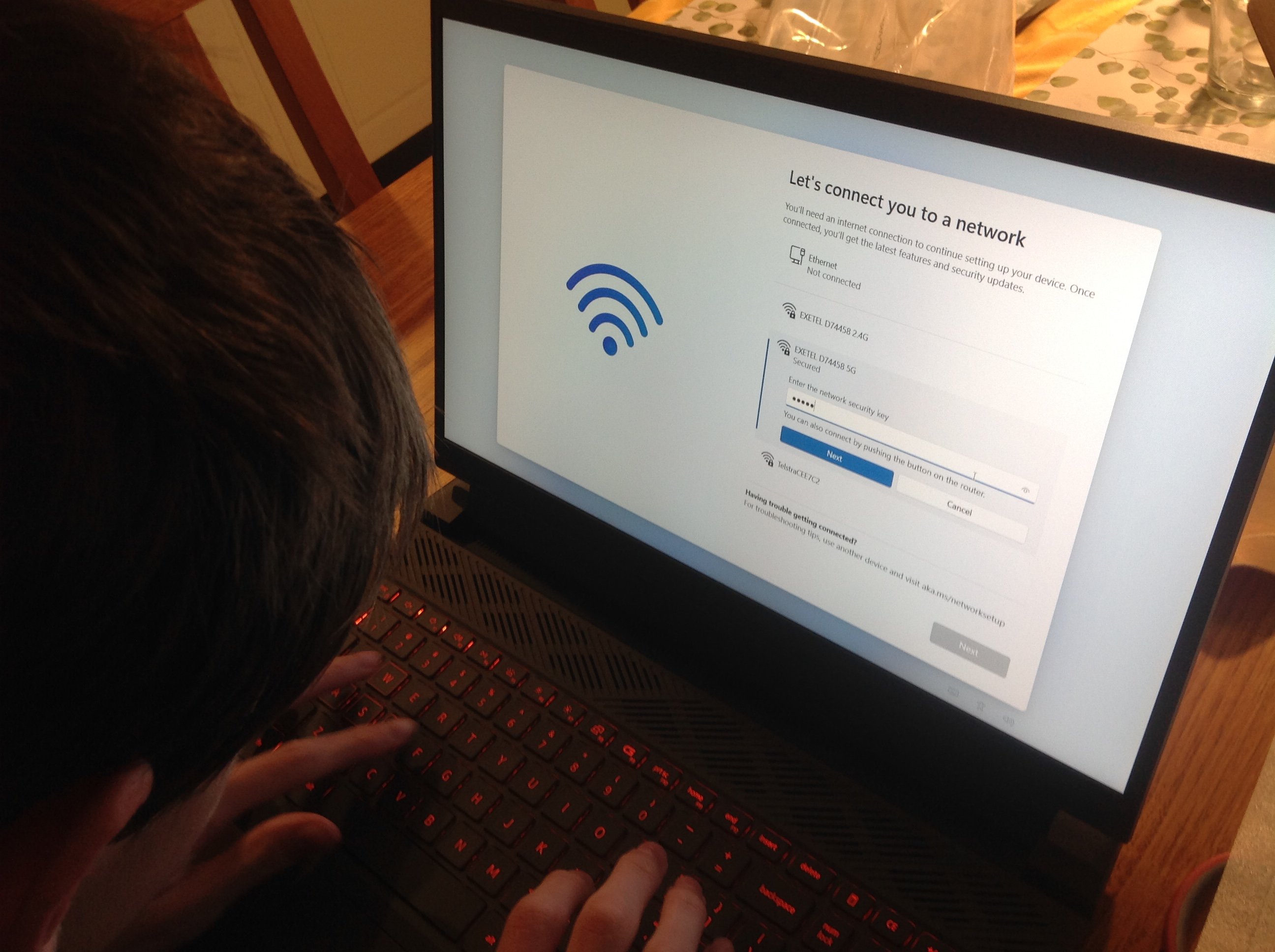

Photograph Source: THOMAS KLIKAUER

One might see human-to-human morality as “morality 1.0”, and AI-to-human morality as “morality 2.0”. AI morality is fundamentally different from human-to-human morality. Our human-centered morality began hundreds of thousands of years ago, when early human tribes created functional communities that demanded codes of conduct shaping human-to-human relations.

Fast forward to today: given all we know about the current stage of artificial intelligence (AI), it is extremely unlikely that we will end up in a “2001: A Space Odyssey” situation.

In Kubrick’s movie, a computer called “HAL” responds to the human command “Open the pod bay doors” with “I’m sorry, Dave. I’m afraid I can’t do that.” In true, and very fictional, Hollywood style, the computer’s rejection of the human command signified the domination of computers over humans. Yet Kubrick also highlights a typical human-machine dilemma.

A concept of futuristic AI, originally put forward by Ray Kurzweil and recently discussed by Elon Musk, suggests that a machine superintelligence will emerge. This is known as ASI, or artificial superintelligence, and is also discussed as HLMI, or Human-Level Machine Intelligence, roughly defined as machine intelligence that outperforms humans on all intellectual tasks.

In this concoction, ASI is set to take over humanity: ASI will become our master rather than the other way around. Alternatively, ASI might view humans the way we see insects, and we all know what humans do to bugs when they overstep their boundaries!

Despite Kurzweil’s idea and the much-hyped recent fear, this is not set to happen in the near future. However, Geoffrey Hinton, one of the so-called godfathers of AI, recently noted: “Right now, they’re not more intelligent than us, as far as I can tell. But I think they soon may be.”

In some of the apocalyptic scenarios, AI is set to bring about a technological singularity. This marks the moment in history at which exponential AI progress brings dramatic change, something AI people call the intelligence explosion. This transformation will be so rapid and severe that we can no longer comprehend it.

Worse, AI is set to become self-sustaining, which means it will exist independently of human beings. In short, ultra-intelligent machines will design ever better machines in a self-driven process, without needing us. It is a bit like a speeding train that one day starts building its own tracks.

The aforementioned Ray Kurzweil remains one of the key proponents of the singularity. He projected that improvements in AI, computers, genetics, nanotechnology, and robotics would reach a final point: the singularity.

At this point, machine intelligence will be more powerful than all human intelligence combined. And, so the prediction goes, human and machine intelligence will merge into a transhumanism that promises eternal life. The formula is:

½ machine + ½ human = eternal life

On the way to Kurzweil’s singularity, the most immediate step is the move to AGI, or artificial general intelligence. This is when our currently highly specialized AIs mutate into general-purpose AI.

As a “general” AI, AGI no longer carries out a single task, such as guiding your car, but can do many different things. The hope, or the fear, is that AGI’s intelligence will match, and perhaps even exceed, the intelligence of humans.

This is, according to some AI experts, our future. What is more real and more current is that AI is not much more than a statistics-based learning machine furnished with a powerful algorithm. When AI learns from experience, as we can and do, AI experts speak of so-called deep learning.

What is currently called AI is nothing but a statistical algorithm that works its way through massive amounts of data, analyzes them statistically, and then issues the most likely course of action. Today’s AI is simply turbo-charged probability theory. It is Bayes on steroids. This is sold as “intelligence” or AI.

This may well change in the future. Perhaps that future moment comes when some

Whether such a futuristic scenario ever comes to pass or not, much of this still has moral implications.

Against this background, moral philosophy argues that human beings and their minds are fundamentally different from machines. As AI expert Hinton says, “the kind of intelligence we’re developing is very different from the intelligence we have … we’re biological systems and these are digital systems.”

Unlike machines, human beings are conscious and, perhaps more importantly, self-conscious. Even more important is the fact that conscious life should not be reduced to a formal description of life. Instead, it is linked to morality and to what moral philosophy calls “what it means to be human”.

Moral philosophy tends to argue against the idea that computer programs, whether AI or not, will ever become conscious, and it doubts whether computers, software, and even AI will ever have a genuine cognitive state of existence. These machines will never understand “meaning”. So far, moral philosophers are right on the mark. To this day, computer programs produce output based on input, and not much more.

Even AI programs follow a specific set of rules, called an algorithm, that is given to them. From the standpoint of moral philosophy, computers, simple programs, and even AI do not “understand” anything.

For moral philosophy, it remains imperative to realize that even AI computer programs lack key human faculties such as intentionality, a concept vital to Kantian moral philosophy.

Moral philosophy also argues that there are crucial differences between how humans and computers think. Unlike AI, human beings are meaning-making beings, and they are conscious and embodied living beings whose nature, mind, and knowledge cannot be explained away by comparisons to machines.

Yet, in philosophical terms, AI still teaches us something about how humans think. In particular, it highlights a kind of human thinking that cannot be formalized with mathematics, because AI itself displays the opposite: a “thinking” based purely on algorithmic control and manipulation.

Quite apart from the philosophical human-machine debate, the very existence of AI has already led to moral, and immoral, consequences. One of the interesting questions about AI is whether AI itself has what philosophy calls ethical agency.

Such philosophical questions are no longer science fiction. Today, we have already handed over some of our decisions to AI algorithms. In cars, they tell us the way. In courtrooms and police stations, they reach decisions that “can” be morally problematic.

In short, AI algorithms have been given some sort of agency in the sense that they do things in the real world. These actions do have moral consequences. This is highly relevant, for example, for the moral philosophy of consequentialism, which argues that if the outcome or consequence of an action is good, the action is moral. On this view, AI can be morally good.

AI’s morality can also be seen as a version of functional morality. Such a morality would require AI to evaluate the moral consequences of its own actions, something it currently lacks.

Once this can be achieved, if it ever can be, AI might even be able to create a superior form of morality. This could occur with AGI, but would definitely occur with ASI, which will be more intelligent than us and hence more moral. That is the hope, at least.

In any case, such a future form of AI morality would also depend on what moral philosophers call a sufficient level of interactivity, autonomy, and adaptivity, and on being capable of morally qualifiable action. None of this can be said about AI today. Perhaps AI morality truly comes to life when AI meets three basic conditions:

1) when AI is truly autonomous, that is, when it can act independently of its human programmer;

2) when AI can explain its own behavior by ascribing moral intentions to its actions, i.e. moral ideas like the intention to do good or harm; and finally,

3) when AI starts behaving in a way that shows an understanding of its own moral responsibilities to other moral agents like human beings.

This could very well mean that what moral philosophy sees as moral agency is no longer connected to our concept of humanness – the philosophical idea of personhood.

Currently, moral philosophy is still correct when arguing that we do not owe anything to machines. We can still see AI and robots as tools, as property. To this day, we have no moral obligations to them.

For human beings, it is, to a large extent, moral intuitions and moral experiences that tell us that there might be something wrong with, for example, mistreating someone. This might even hold for AI, even though AI does not have human-like or animal-like properties such as consciousness and sentience. Intuitively, for example, shooting a dog seems to breach our moral duty towards the dog.

But, and this is a key argument of moral philosophy, a person who shoots a dog damages the humane qualities in himself. These are the qualities we ought to exercise by virtue of our moral duty to animals and mankind.

In other words, when we damage ourselves in this way, we become less human. As the moral philosopher Kant argues, one becomes less human when shooting or even mistreating a dog. And in some distant future, we may even become less human by mistreating or killing an AI.

In addition, if we treat our dog kindly, so moral philosophy argues, this may not be because we engage in moral reasoning about our dog. Instead, it is because we already have a kind of social relation with our dog.

The dog is already a pet and a companion before we do the philosophical work of ascribing moral status to it. At some point, AI may be able to function in a similar way, as shown rather insightfully by the example of the AI mistress.

In the end, reflecting on the morality of AI teaches us not only something about AI but also something about ourselves. Finally, it teaches us how we think and how we actually do, should, and ought to relate to the non-human environment, including animals, plants, and even artificial intelligence.